Biases and harmful content in media, referred to in this project as toxicity, are not new phenomena, and AI-generated content is no exception. This project explored live streams of AI-generated parodies of the popular TV shows Family Guy and SpongeBob SquarePants. Using quantitative and qualitative methods, it examined these AI parodies in terms of the toxicity exhibited both in the streams themselves and in viewers' live chat comments. It investigated the relationship between toxicity in stream and chat, as well as the nature of the toxicity and the ways in which it manifested.

While the quantitative methods found no evidence of a strong link between toxicity levels in stream and chat, the qualitative analysis yielded interesting insights. Toxicity in these AI parodies mirrored the kinds of toxicity seen in the original shows: the community around AI Family Guy displayed similar kinds of bias and harmful stereotyping as the original Family Guy, while AI SpongeBob SquarePants displayed far less problematic forms of toxicity.

Research Questions

- How does toxicity develop over the course of AI-generated live streams?

- Does biased/harmful content appearing on screen invite toxicity in the live chat?

- Does toxicity in the live chat lead to more biased/harmful prompts being submitted to the AI?

- Do these two phenomena lead to a feedback loop of toxicity and harmful content?

- What form does toxicity take in AI-generated live streams?

- Which topics discussed within the live chat trigger more toxic reactions?

- Which types of toxicity appear more often than others?

- How does the source material affect the toxicity in the AI versions?

Methodology

First, two YouTube channels were selected for this project: ai_peter and AI Sponge Rehydrated. The latter has since been taken down following claims of copyright infringement, but ai_peter remains operational at the time of writing. The automatically generated subtitles of live streams from these two channels were then scraped to analyse the streams' content, alongside the transcripts of the live chat. Both were processed line by line through the Perspective API, which rated each subtitle line and chat comment on a scale from 0 to 1 according to how toxic it appeared to be.
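A minimal sketch of the scoring step in Python, using only the standard library. It assumes the Perspective API's `comments:analyze` endpoint with the `TOXICITY` attribute, an API key supplied by the caller (`api_key` is a placeholder), and a one-second pause between requests as a conservative guess at the quota. The helper names are hypothetical and not taken from the project's own code.

```python
import json
import time
import urllib.request

# Perspective API analyze endpoint; the key is appended as a query parameter.
ENDPOINT = "https://commentanalyzer.googleapis.com/v1alpha1/comments:analyze"


def build_payload(text: str) -> dict:
    """Request body asking only for the TOXICITY attribute of one line."""
    return {
        "comment": {"text": text},
        "languages": ["en"],
        "requestedAttributes": {"TOXICITY": {}},
    }


def parse_toxicity(response: dict) -> float:
    """Extract the 0-1 summary score from a Perspective API response."""
    return response["attributeScores"]["TOXICITY"]["summaryScore"]["value"]


def score_line(text: str, api_key: str) -> float:
    """Send one subtitle line or chat comment to the API and return its score."""
    req = urllib.request.Request(
        f"{ENDPOINT}?key={api_key}",
        data=json.dumps(build_payload(text)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return parse_toxicity(json.load(resp))


def score_lines(lines: list[str], api_key: str, delay: float = 1.0) -> list[float]:
    """Score lines one at a time, pausing between calls (assumed quota)."""
    scores = []
    for line in lines:
        scores.append(score_line(line, api_key))
        time.sleep(delay)
    return scores
```

Keeping payload construction and response parsing in separate functions makes them easy to check without network access; only `score_line` actually calls the API.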

These scores were then used to compare toxicity levels between streams and chats, identifying peaks where toxicity spiked suddenly and examining those sections in closer detail. The one-minute intervals following these peaks were analysed manually, looking at what people were saying, how they were saying it and which topics were discussed most frequently.
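The peak-finding step can be sketched as follows, assuming each scored line is stored as a hypothetical `(seconds_from_stream_start, value)` pair. Scores are averaged into one-minute bins, and a minute counts as a peak when its mean exceeds the overall mean by `k` standard deviations; that threshold is an illustrative assumption, since no specific peak criterion is given here. `window_after` then collects the text of a one-minute interval for manual reading.

```python
from collections import defaultdict
from statistics import mean, pstdev


def minute_means(scored: list[tuple[float, float]]) -> dict[int, float]:
    """Average toxicity per 1-minute bin; scored = [(seconds, score), ...]."""
    bins: dict[int, list[float]] = defaultdict(list)
    for t, score in scored:
        bins[int(t // 60)].append(score)
    return {minute: mean(vals) for minute, vals in sorted(bins.items())}


def find_peaks(means: dict[int, float], k: float = 2.0) -> list[int]:
    """Minutes whose mean exceeds the overall mean by k standard deviations.

    k = 2.0 is an illustrative assumption, not the project's criterion.
    """
    values = list(means.values())
    mu, sigma = mean(values), pstdev(values)
    return [m for m, v in means.items() if v > mu + k * sigma]


def window_after(items: list[tuple[float, str]], start_s: float,
                 length_s: float = 60.0) -> list[str]:
    """Texts falling in [start_s, start_s + length_s), for manual review."""
    return [text for t, text in items if start_s <= t < start_s + length_s]
```

For a peak minute `p`, `window_after(chat_lines, p * 60)` returns the chat comments from that minute, which can then be read alongside the matching subtitles.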

Results

The results were twofold. The quantitative analysis did not yield sufficient evidence of a link between toxicity in stream and chat; further refinement of the methods may be required.

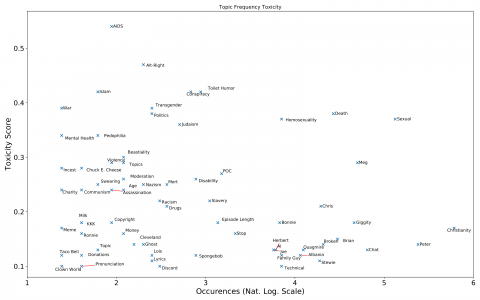

The qualitative analysis, however, did produce some insights. Toxicity in the AI Family Guy stream more often than not took the form of jokes targeting marginalized groups, or at least making fun of them. Characters from the show who belong to marginalized groups, such as Meg (women) or Mort (Jewish people), were associated with far higher levels of toxicity than characters like Peter or Quagmire (heterosexual white men).

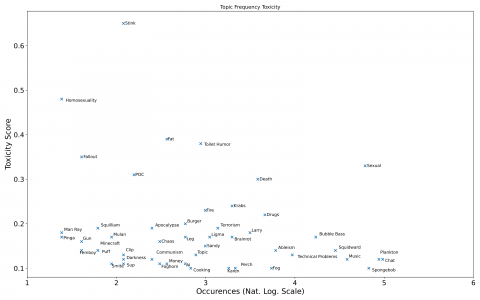

The same could not be said for AI SpongeBob SquarePants, however. Toxicity in this community mostly took the form of juvenile toilet humor or sexual jokes. No single character seemed to receive a disproportionate level of toxicity, regardless of their demographic status.